• Distributed Coordination of Robotic Swarms

Swarming, the grouping of a large and arbitrary number of entities, can be observed in many living beings, such as flocks of birds and schools of fish. The inspiring aspect of this phenomenon is that although the intelligence of each individual member is limited, the swarm still achieves sophisticated and efficient group behavior. In the last decade, distributed coordination control of large-scale swarm systems (including robotic swarms) has attracted increasing interest in the control and robotics communities. A large group of mobile agents (e.g., mobile robots or mobile sensors) equipped with computing, sensing, and communication devices can serve as a platform for a variety of coordination tasks in civilian and military applications.

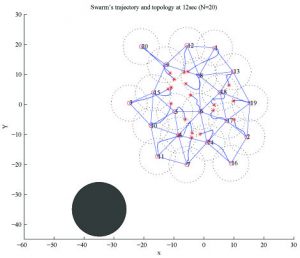

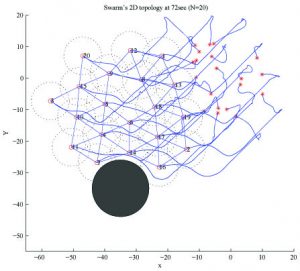

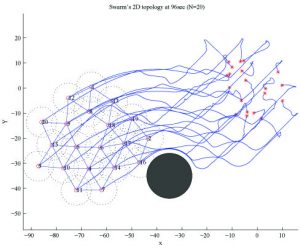

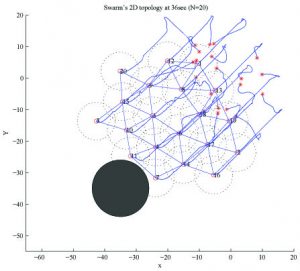

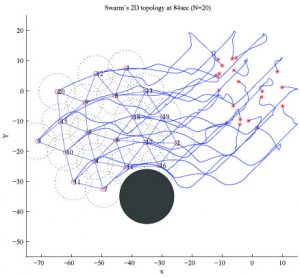

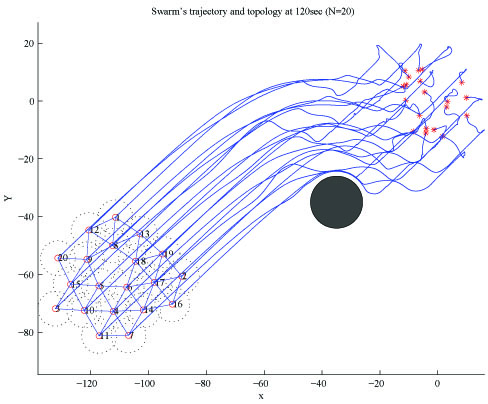

In this research, we study the underlying principles of swarms, develop a biologically inspired systematic methodology, and build a unified theoretical framework for designing motion-coordination controllers for robotic swarms. We use algebraic graphs to model the topology of the swarm, which embodies the neighborhood, communication, or sensing relations among its members. We consider the general case in which the swarm’s topology changes dynamically as the swarm moves. Using this framework, we design scalable decentralized controllers for several coordinated-motion scenarios, namely obstacle avoidance, mobile surveillance, mobilization, rendezvous, and virtual-leader tracking control.
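As a rough illustration of the graph-based approach, the sketch below simulates rendezvous, one of the scenarios listed above, with a proximity graph that is rebuilt at every step, so the topology changes as the agents move. It is not the controller developed in this research: the neighbor-averaging (graph Laplacian) update, the sensing radius, the gain, the initial positions, and all function names are assumptions chosen for illustration.

```python
import math


def neighbors(positions, i, radius):
    """Indices of agents within the sensing radius of agent i (proximity graph)."""
    xi, yi = positions[i]
    return [j for j, (xj, yj) in enumerate(positions)
            if j != i and math.hypot(xj - xi, yj - yi) <= radius]


def rendezvous_step(positions, radius, gain):
    """One synchronous update in which each agent moves toward its current neighbors."""
    new_positions = []
    for i, (xi, yi) in enumerate(positions):
        nbrs = neighbors(positions, i, radius)  # topology is recomputed every step
        # Assumed Laplacian-style law: u_i = sum over neighbors j of (x_j - x_i)
        ux = sum(positions[j][0] - xi for j in nbrs)
        uy = sum(positions[j][1] - yi for j in nbrs)
        new_positions.append((xi + gain * ux, yi + gain * uy))
    return new_positions


def spread(positions):
    """Largest distance between any two agents."""
    return max(math.hypot(ax - bx, ay - by)
               for ax, ay in positions for bx, by in positions)


def centroid(positions):
    """Mean position of the swarm."""
    n = len(positions)
    return (sum(x for x, _ in positions) / n, sum(y for _, y in positions) / n)


# Hypothetical initial layout: five agents forming a connected chain.
positions = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.5), (3.0, 0.0), (4.0, 1.0)]
initial_spread = spread(positions)
initial_centroid = centroid(positions)

for _ in range(200):
    positions = rendezvous_step(positions, radius=1.5, gain=0.1)

final_spread = spread(positions)
final_centroid = centroid(positions)
print(initial_spread, final_spread, final_centroid)
```

Because every agent uses only the relative positions of agents it can currently sense, the update is decentralized and its cost per agent does not grow with the size of the swarm. The update is symmetric between neighbors, so the swarm centroid stays fixed while the spread shrinks. This simple law does not by itself guarantee that the graph stays connected as the agents move.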

Leading Faculty: Dr. Li and Dr. Ma

Students: Tenzing Rabgyal, Victor Liang

The videos below show a swarm of 20 agents collectively avoiding an obstacle (the black disk), as shown in the images above, and a swarm of 12 agents conducting mobile surveillance along a circular trajectory while maintaining even spacing between one another. In both simulations, each agent senses only its nearest neighbors.

• Dynamic Spectrum Coordination in Cognitive Mesh Networks

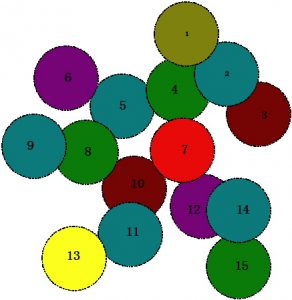

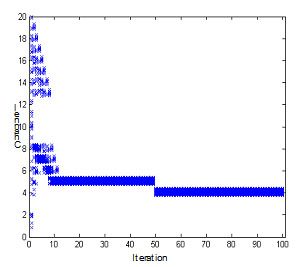

Cognitive radio (CR) has been advanced as a technology for opportunistic use of under-utilized radio spectrum. A cognitive mesh network is an emerging type of wireless mesh network that exploits CR technology to achieve reliable communication. A distributed spectrum coordination algorithm is needed so that the nodes in a cognitive mesh network converge to a common spectrum and can establish communication links among themselves. A cognitive mesh network with dynamic spectrum coordination will benefit a wide range of applications. For example, on a hostile battlefield, many communication channels may suffer interference or be jammed, which disables communication for many nodes. A cognitive mesh network can adaptively and rapidly shift the common channel to another available channel and thus provide highly reliable communication.

In this project, we aim to deliver both a theoretical foundation and a practical solution for dynamic spectrum coordination in cognitive mesh networks. First, we proposed a biologically inspired, scalable, and mathematically provable distributed spectrum coordination algorithm. Then, we provided a general analytical study of the spectrum selection behavior of individual nodes and of the entire network. We also analytically investigated the fundamental features of the convergence time of the proposed algorithm. Beyond the theoretical work, we will implement the developed algorithm on a small-scale CR mesh network as a prototype.
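To make the coordination problem concrete, the sketch below shows one very simple way for nodes to agree on a common channel using only information from their mesh neighbors. It is not the biologically inspired algorithm proposed in this project. It swaps in a plain min-consensus rule, and it assumes a connected mesh, synchronous rounds, and a jammed-channel set that every node can detect. The function name, the ring topology, and the channel numbers are hypothetical.

```python
def coordinate_channels(adjacency, channels, num_channels,
                        jammed=frozenset(), max_rounds=100):
    """Min-consensus channel agreement. Returns (channel per node, rounds used)."""
    usable = [c for c in range(num_channels) if c not in jammed]
    if not usable:
        raise ValueError("no usable channel")
    # A node sitting on a jammed channel first hops to the lowest usable one.
    current = [c if c not in jammed else usable[0] for c in channels]
    for round_index in range(max_rounds):
        # Each node adopts the lowest channel among itself and its neighbors.
        updated = [min([current[i]] + [current[j] for j in adjacency[i]])
                   for i in range(len(current))]
        if updated == current:
            # No node changed its channel, so the network has settled.
            return current, round_index
        current = updated
    return current, max_rounds


# Hypothetical six-node ring mesh; node i talks to its two ring neighbors.
ring = {i: [(i - 1) % 6, (i + 1) % 6] for i in range(6)}
start = [3, 5, 2, 4, 1, 3]

final, rounds = coordinate_channels(ring, start, num_channels=6)
print(final, rounds)

# If channel 1 is jammed, the network settles on a different common channel.
final_jammed, _ = coordinate_channels(ring, start, num_channels=6, jammed={1})
print(final_jammed)
```

In this toy rule the number of rounds is bounded by the diameter of the mesh, which gives a feel for why convergence time is a natural quantity to analyze for any distributed spectrum coordination scheme.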

Leading Faculty: Dr. Li

• Wearable Vision-Based American Sign Language Translation System

With the advancement of miniature sensing and computing devices, vision systems can be made smaller and wearable. In this project, we aim to design and develop a compact and portable vision system for hand gesture recognition, arm movement tracking, and scene/context understanding. This system will enable speech-impaired or deaf people to communicate freely with members of the general public who have no knowledge of ASL, and help them be more accepted by society.

Leading Faculty: Dr. Xiaohai Li

Students: Jacky Yuan, Jane Lynnel Ladaban, Shuhua Song

Date: October 2019

Video of another successful prototype, developed by another group of students, which uses the TensorFlow machine learning platform for hand gesture recognition and Microsoft Cognitive Service on Microsoft Azure for the speech feature. A small dataset of 1,200 images was created and labeled to train the ML model on 10 daily phrases.

Instructor: Dr. Xiaohai Li

Students: Abdelaziz ABDELKEFI, Carole-Anne COS, Sarah FAGES and Elisa PINAROLI (Exchange students from ECE, France).

Date: December 2021

• Vision-assisted Cooperative Tracking/Enclosure of Multiple Targets

In this research project, we design and develop scalable control algorithms to command a group of robots, consisting of unmanned aerial vehicles (UAVs) and/or unmanned ground vehicles (UGVs), to track/follow, observe/monitor, and enclose/encircle several stationary or moving targets. Each robot (also called an agent) has an onboard camera capable of real-time image processing and feature extraction of the targets. To achieve the control objective as a group, each robot needs to share its own information with its neighbors via wireless inter-vehicle communication. This project includes both the investigation of control methods and the implementation of the developed algorithms on a physical robotic network for verification and validation. The project has the following three stages:

Stage 1: Suppose there are multiple targets on the ground, either stationary or moving in the same direction with similar velocities. Suppose there is only one UGV. The UGV will enclose/track the center of all targets.

Stage 2: Suppose there are multiple targets on the ground, stationary and/or moving in different directions. Suppose there are multiple UGVs. These UGVs will be arranged into subgroups, each of which focuses on one or more targets. The assignment of each robot and the formation pattern of each subgroup will adjust according to the locations/velocities of the targets and the addition or removal of targets.

Stage 3: Suppose there are multiple stationary and/or moving targets on the ground. Suppose there are one or more UAVs and several UGVs. All these robots (UAVs and UGVs) will be commanded to track/observe/enclose the targets depending on the number of targets, the number of robots, the location/velocity of each target, and the communication topology between/among the robots.
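The geometric core of the enclosing task can be sketched as follows: compute the center of the targets, pick an orbit radius large enough to contain all of them, and place the robots at evenly spaced points on that orbit. With one robot this corresponds to the Stage 1 setting. This is only an illustrative sketch, not the control algorithm developed in the project: the function names, the safety margin, the even angular spacing, and the example coordinates are assumptions, and target positions are taken as already extracted from the camera images.

```python
import math


def target_center(targets):
    """Centroid of the target positions (x, y)."""
    n = len(targets)
    return (sum(x for x, _ in targets) / n, sum(y for _, y in targets) / n)


def enclosing_radius(targets, center, margin):
    """Distance from the center to the farthest target, plus a safety margin."""
    cx, cy = center
    return max(math.hypot(x - cx, y - cy) for x, y in targets) + margin


def orbit_waypoints(center, radius, num_robots, phase):
    """Evenly spaced reference points on a circle around the center."""
    cx, cy = center
    step = 2.0 * math.pi / num_robots
    return [(cx + radius * math.cos(phase + k * step),
             cy + radius * math.sin(phase + k * step))
            for k in range(num_robots)]


# Hypothetical target positions in meters.
targets = [(0.0, 0.0), (4.0, 0.0), (4.0, 3.0), (0.0, 3.0)]
center = target_center(targets)
radius = enclosing_radius(targets, center, margin=1.0)

single_ugv = orbit_waypoints(center, radius, num_robots=1, phase=0.0)
four_ugvs = orbit_waypoints(center, radius, num_robots=4, phase=0.0)
print(center, radius, single_ugv)
```

Advancing `phase` over time moves the reference points around the circle, and recomputing `center` at each step lets the orbit follow targets that move together.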

Leading Faculty: Dr. Ma, Dr. Li and Dr. Xu

• City Prime: A Heteromorphism Robot

In this project, we design and develop a heteromorphism robot, i.e., a “transformer robot”, which can reshape its structure and reconfigure its locomotion mechanism to adapt to the environment/terrain and the desired tasks. The demonstration videos here show that City Prime can freely transform between a humanoid form and a rover vehicle.

Faculty: Dr. Li

Students: Gene Nadela

Another video of City Prime.

• Miniature Quadrocopters: A Reconfigurable Intelligent Platform for 3D Urban Environment Exploration

A variety of civilian and security applications in urban areas cluttered with skyscrapers, such as crowd control, security surveillance, emergency response, and disaster rescue, require comprehensive scene exploration and understanding of the environment. Traditional helicopters and ground vehicles may not be able to access certain constrained or hostile scenes and cannot provide prompt first-hand information.

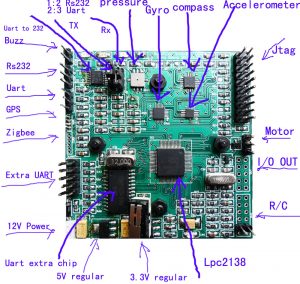

In this project, we aim to design and develop a team of compact unmanned quadrocopters that can be deployed 24/7 and can access constrained environments for mapping and scene understanding. We will exploit the aerodynamic advantages of quadrotors and use a nontraditional motor to achieve reliable, long-duration missions on a limited power source. The unique quadrotor design eliminates the main chassis rotor and anti-torque rotor, which leads to better aerodynamic maneuverability and high reliability in the case of rotor failure. We design and customize a motor driver and an autopilot system to stabilize the vehicle’s flight; the autopilot will receive control and trajectory commands from an upper-level system that includes advanced sensing, decision-making, and computing components.

Leading Faculty: Dr. Li and Dr. Wang

Students: Leonardo Chiang, Jane Lynnel Ladaban, Tenzing Rabgyal, Victor Liang, Daniel Huljev, George Perez